Web scraping is now considered one of the most efficient ways for a business to cater to its prospects' needs. “Get exactly what you want. 100% satisfaction guaranteed. Crawl and extract data from any website.”

To succeed in a market that keeps fluctuating, brands need reliable information. Web scraping gives them access to data that can turn their current solution into an improved version of what their prospects expect from them.

Demand for web scraping has grown over the years, partly because of the increase in cyber-attacks online. The web offers a vast amount of information that a brand like yours can use in its research, but are you aware that not all the data you find online is useful for your business?

Quality data is difficult to find online. Because of the risks associated with the online world, valuable sources restrict who can access their data. In response, web scraping grew in demand as a way to collect that information without the website owners having the slightest clue.

However, the risks of the online world have not gone away, and web scraping itself is now often identified as suspicious activity and blacklisted. Despite this, web scraping can still be carried out if the right precautions are taken.

Follow the precautions listed in this article to conduct efficient web scraping without any hindrance.


WHAT DO YOU MEAN BY WEB SCRAPING?

Web scraping is the process of extracting information from any source, website or server that holds it. When quality data is difficult to retrieve online, web scraping helps brands like yours collect it without any hassle.

Web scraping is attracting attention from many brands because:

1. It can scrape data anonymously at any time

2. It is much quicker than collecting the data manually

3. The data retrieved is accurate and comes from a reliable source

4. It provides data that brands can use to refine their current solution

Web scraping doesn’t consume much of a user’s time. All you need to do is select the source you want to scrape and start the scraping activity; the data is then saved to your system in the format you prefer, such as a CSV file.
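To make the "scrape, then save as CSV" step concrete, here is a minimal sketch using only Python's standard library. The sample HTML, the `TableCellParser` class and the `html_table_to_csv` helper are made up for illustration; a real page will need its own parsing rules.

```python
import csv
import io
from html.parser import HTMLParser


class TableCellParser(HTMLParser):
    """Collect the text of every <td> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None
        self._in_cell = False

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag == "td" and self._row is not None:
            self._in_cell = True
            self._row.append("")

    def handle_data(self, data):
        if self._in_cell:
            self._row[-1] += data.strip()

    def handle_endtag(self, tag):
        if tag == "td":
            self._in_cell = False
        elif tag == "tr" and self._row is not None:
            if self._row:
                self.rows.append(self._row)
            self._row = None


def html_table_to_csv(html):
    """Return the table cells found in `html` as CSV text."""
    parser = TableCellParser()
    parser.feed(html)
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(parser.rows)
    return buffer.getvalue()


# Stand-in for a downloaded page (hypothetical content).
SAMPLE_HTML = """
<table>
  <tr><td>Widget</td><td>9.99</td></tr>
  <tr><td>Gadget</td><td>24.50</td></tr>
</table>
"""

csv_text = html_table_to_csv(SAMPLE_HTML)
print(csv_text)
```

Writing `csv_text` to a file instead of printing it gives you the CSV file mentioned above.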

Web scraping is great for business because it gives brands like yours quality data that you can apply to the solution you are selling. For instance, what would happen if you scraped your competitor's website? The information you retrieve would show you what their offering is missing, so you could add those factors and make your solution the one prospects have been looking for.

Many web scraping applications are sold in the market. If you plan to scrape regularly, you will need to take care that your activity never gets you blacklisted.

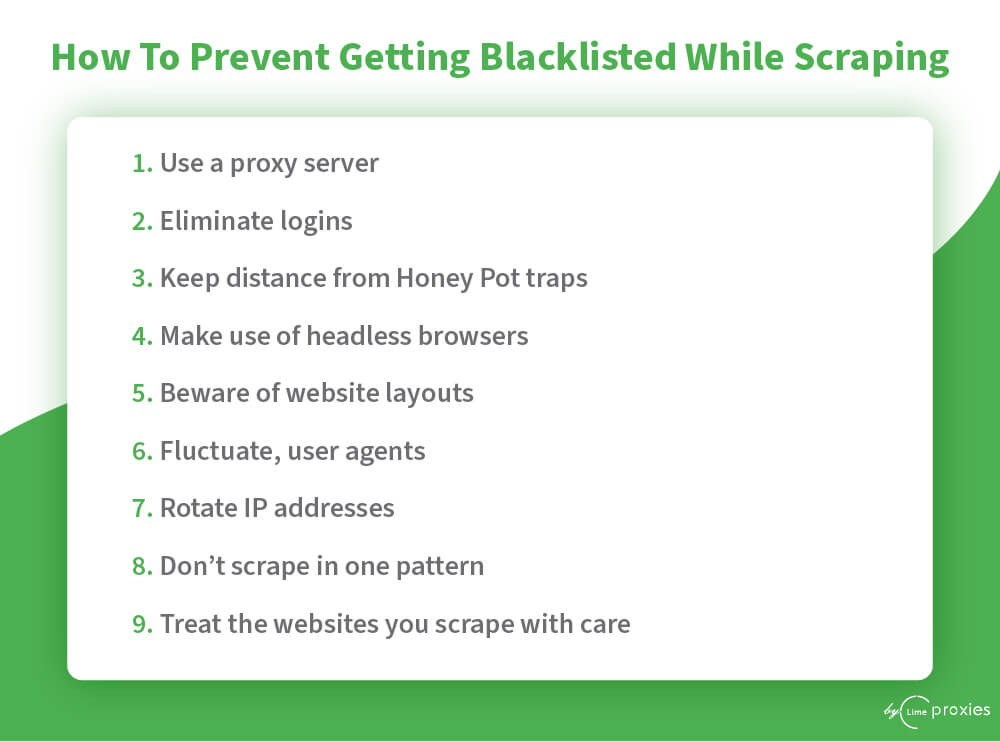

HOW TO PREVENT GETTING BLACKLISTED WHILE SCRAPING?

1. USE A PROXY SERVER

A proxy server is an intermediary that lets you access information from a particular source, website or server without revealing your identity. Many users get blocked when scraping a website because their IP address is exposed; proxy servers are designed to prevent that from happening.

Run your web scraping activities through proxy servers so that your brand stays clear of the risk of getting blacklisted.
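As a rough illustration, routing requests through a proxy with Python's standard `urllib` might look like the sketch below. The proxy address is a placeholder, not a real service, and `build_proxy_opener` is a hypothetical helper name.

```python
import urllib.request

# Hypothetical proxy endpoint; replace with one you are authorized to use.
PROXY_URL = "http://proxy.example.com:8080"


def build_proxy_opener(proxy_url):
    """Return a urllib opener that routes HTTP and HTTPS requests via proxy_url."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


opener = build_proxy_opener(PROXY_URL)

# To fetch a page through the proxy (this performs a real network request):
# html = opener.open("https://example.com", timeout=10).read()
```

The target website then sees the proxy's IP address rather than yours.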

2. ELIMINATE LOGINS

Another way your web scraping activity can be detected is by scraping websites that require logins. When you access a login-protected page, your requests carry information such as cookies that show the website owner where the access is coming from.

When the website owner sees repeated requests coming from the same session or IP address, you get blocked. It’s wiser to avoid scraping pages that sit behind logins so that you don’t fall into this trap.

3. KEEP DISTANCE FROM HONEY POT TRAPS

Honey pot traps are installed to catch hackers and users who try to access information they are not authorized to see. A honey pot mimics a real system and contains links that normal users cannot see but that web scrapers can.

When you spot one, it is better to take a step back, because once you go further you will fall into the trap and get blocked easily. The only way to break through this barrier is some serious programming, which is difficult in itself.
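One simple precaution is to skip links that a human visitor could not see. The sketch below, using Python's standard `html.parser`, only checks for the `hidden` attribute and two inline styles; these checks are assumptions about how a trap might be hidden. Real honey pots may instead rely on CSS classes, external stylesheets or hidden parent elements, which this example does not detect.

```python
from html.parser import HTMLParser


class VisibleLinkParser(HTMLParser):
    """Collect hrefs, skipping links hidden with common honey pot tricks."""

    HIDDEN_STYLES = ("display:none", "visibility:hidden")

    def __init__(self):
        super().__init__()
        self.visible_links = []
        self.skipped_links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        href = attr.get("href")
        if not href:
            return
        # Normalize the inline style so "display: none" matches "display:none".
        style = (attr.get("style") or "").replace(" ", "").lower()
        hidden = "hidden" in attr or any(s in style for s in self.HIDDEN_STYLES)
        (self.skipped_links if hidden else self.visible_links).append(href)


# Stand-in for a downloaded page (hypothetical content).
SAMPLE_HTML = """
<a href="/products">Products</a>
<a href="/trap-1" style="display: none">secret</a>
<a href="/trap-2" hidden>secret</a>
<a href="/about">About</a>
"""

parser = VisibleLinkParser()
parser.feed(SAMPLE_HTML)
print(parser.visible_links)
print(parser.skipped_links)
```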

4. MAKE USE OF HEADLESS BROWSERS

Headless browsers let you scrape information from a command-line interface. A headless browser functions like any other browser, except that it has no graphical interface. It is necessary because the content of many websites depends heavily on JavaScript and CSS, so what a browser displays differs from the raw HTML. A scraper that requests only the raw HTML does not behave like a real browser, and chances are it will be blacklisted.

Avoid this by using a headless browser, since much of the information you wish to scrape is only available once a browser has rendered the page.

5. BEWARE OF WEBSITE LAYOUTS

Another way to avoid getting blacklisted while scraping is to check whether the websites you scrape use different layouts. This is tricky, because a scraper that does not follow the layout of the page it is on can be detected as suspicious and blacklisted. Avoid that by making sure you are aware of all the layouts a website uses and scrape according to each one.

6. FLUCTUATE USER AGENTS

A user agent is a string that tells a server which web browser is being used. Some websites will not display their content if a request arrives without a user agent. Once you set one, every request you send carries the same User-Agent header, and when that identical header shows up numerous times, chances are you will be flagged as suspicious.

Avoid that by constantly changing your user agent so that the User-Agent header differs from one request to the next.
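A minimal sketch of this idea with Python's standard library is shown below. The User-Agent strings are illustrative examples only, and `build_request` is a hypothetical helper; a real scraper should keep its own list of current browser strings.

```python
import itertools
import urllib.request

# Example User-Agent strings (illustrative; keep your own list up to date).
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.0 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:120.0) Gecko/20100101 Firefox/120.0",
]

# Cycle through the list so consecutive requests never share a header.
_agent_cycle = itertools.cycle(USER_AGENTS)


def build_request(url):
    """Return a Request for `url` carrying the next User-Agent in the cycle."""
    return urllib.request.Request(url, headers={"User-Agent": next(_agent_cycle)})


# Build (but do not send) four requests to a placeholder URL.
requests_to_send = [
    build_request("https://example.com/page/%d" % i) for i in range(4)
]
for request in requests_to_send:
    print(request.get_header("User-agent"))
```

Picking a random entry with `random.choice` would also work; the cycle is used here only because it guarantees that two consecutive requests differ.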

7. ROTATE IP ADDRESSES

IP addresses are the main way a website detects whether the user accessing its information is authorized, because an IP address gives away the user's location. If you scrape on a regular basis, make sure your IP address is always rotating. As mentioned earlier, proxy servers can help here, since many proxy services offer a pool of IP addresses.
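One way to rotate IP addresses is to cycle through a pool of proxies, using a different one for each request. The sketch below assumes a pool of three proxy endpoints; the addresses are placeholders from a documentation-only range, and `RotatingProxyFetcher` is a hypothetical class name.

```python
import itertools
import urllib.request

# Hypothetical proxy endpoints, each exposing a different exit IP address.
PROXY_POOL = [
    "http://192.0.2.10:8080",
    "http://192.0.2.11:8080",
    "http://192.0.2.12:8080",
]


class RotatingProxyFetcher:
    """Send each request through the next proxy in the pool."""

    def __init__(self, proxies):
        self._cycle = itertools.cycle(proxies)
        self.last_proxy = None

    def next_opener(self):
        """Return an opener bound to the next proxy in the rotation."""
        self.last_proxy = next(self._cycle)
        handler = urllib.request.ProxyHandler(
            {"http": self.last_proxy, "https": self.last_proxy}
        )
        return urllib.request.build_opener(handler)

    def fetch(self, url, timeout=10):
        """Fetch `url` through the next proxy (performs a real request)."""
        with self.next_opener().open(url, timeout=timeout) as response:
            return response.read()


fetcher = RotatingProxyFetcher(PROXY_POOL)
# html = fetcher.fetch("https://example.com")  # real network call
```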

8. DON’T SCRAPE IN ONE PATTERN

Another way to avoid getting blacklisted while scraping is to keep changing the way you scrape. A scraper usually follows a single pattern, which makes it look suspicious and gets you blacklisted. Avoid that by scraping as a human would. For instance, read the information a little more slowly, move the mouse and click on different pages. All of this helps to show that a human, not a bot, is viewing the information.
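Part of this can be approximated in code by visiting pages in a shuffled order and pausing for a random interval between them. The sketch below is one possible approach; the delay bounds and the `humanlike_schedule` and `crawl` helpers are assumptions for illustration, and simulating mouse movement would need a real browser, which is not shown here.

```python
import random
import time


def humanlike_schedule(urls, min_delay=2.0, max_delay=8.0, rng=None):
    """Return (url, delay_seconds) pairs in shuffled order with random pauses."""
    rng = rng or random.Random()
    order = list(urls)
    rng.shuffle(order)
    return [(url, rng.uniform(min_delay, max_delay)) for url in order]


def crawl(urls, fetch, sleep=time.sleep):
    """Call `fetch` on each URL in an irregular order, pausing after each one."""
    for url, delay in humanlike_schedule(urls):
        fetch(url)
        sleep(delay)


# Placeholder URLs; pass your own fetch function to crawl().
PAGES = [
    "https://example.com/a",
    "https://example.com/b",
    "https://example.com/c",
]
schedule = humanlike_schedule(PAGES, rng=random.Random(0))
```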

9. TREAT THE WEBSITES YOU SCRAPE WITH CARE

A human would never hammer a website. Similarly, avoid scraping continuously. Scraping is so quick that a website owner will readily realize that a bot is behind it. Avoid raising that suspicion by taking breaks: scrape just a little information, then wait a few seconds. Also slow the scraping itself down a bit, because a human can’t possibly read all that information in a minute; it takes time.
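A small throttle that enforces a minimum pause between requests is one way to build in those breaks. In the sketch below the `Throttle` class and the 3-second interval are arbitrary assumptions; choose a value that suits the site you are scraping.

```python
import time


class Throttle:
    """Enforce a minimum pause between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, if any

    def wait(self):
        """Block until at least min_interval seconds have passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


throttle = Throttle(min_interval=3.0)

# Typical use inside a scraping loop (fetch is your own function):
# for url in urls:
#     throttle.wait()
#     fetch(url)
```

The first call to `wait()` returns immediately; later calls sleep only for whatever part of the interval has not already elapsed.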

About the author

Rachael Chapman

A Complete Gamer and a Tech Geek. Brings out all her thoughts and Love in Writing Techie Blogs.
